Few-shot prompting (FSP) is a technique for guiding the LLM by showing it examples of what users might say and how the agent should respond. This helps the agent:

- Match vague or unexpected inputs to the correct tool call

- Extract values in tricky formats (e.g., spelled names, long reference codes)

- Avoid asking unnecessary questions when the value is already present

- Maintain a consistent tone, phrasing, or logic pattern

Where you can use few-shot prompting

FSP works anywhere the LLM reads a prompt. The most common places in Agent Studio are:

- Flow step prompts – the step prompt field in the Flow Editor

- Topic actions – the action prompt within a managed topic

- Agent behavior prompts – global rules that shape the agent’s overall behavior

The examples on this page use flow steps, but the same principles apply wherever you write prompts.

Why it matters

In a flow, the agent only sees:

- The current step prompt

- The listed functions (names, descriptions, arguments)

It does not see previous step prompts or conversation state unless you surface them.

Each step must stand alone. Few-shot prompting fills in the gaps by giving the model examples to reason from.

Because step prompts are inserted last in the LLM input stack, FSP examples appear directly before the model generates its next turn – making them highly influential.

Basic structure

Each few-shot example consists of:

- A realistic user message

- A matching agent behavior – often a response plus a tool call

Place these inside the prompt, either inline or at the top before your main instructions.

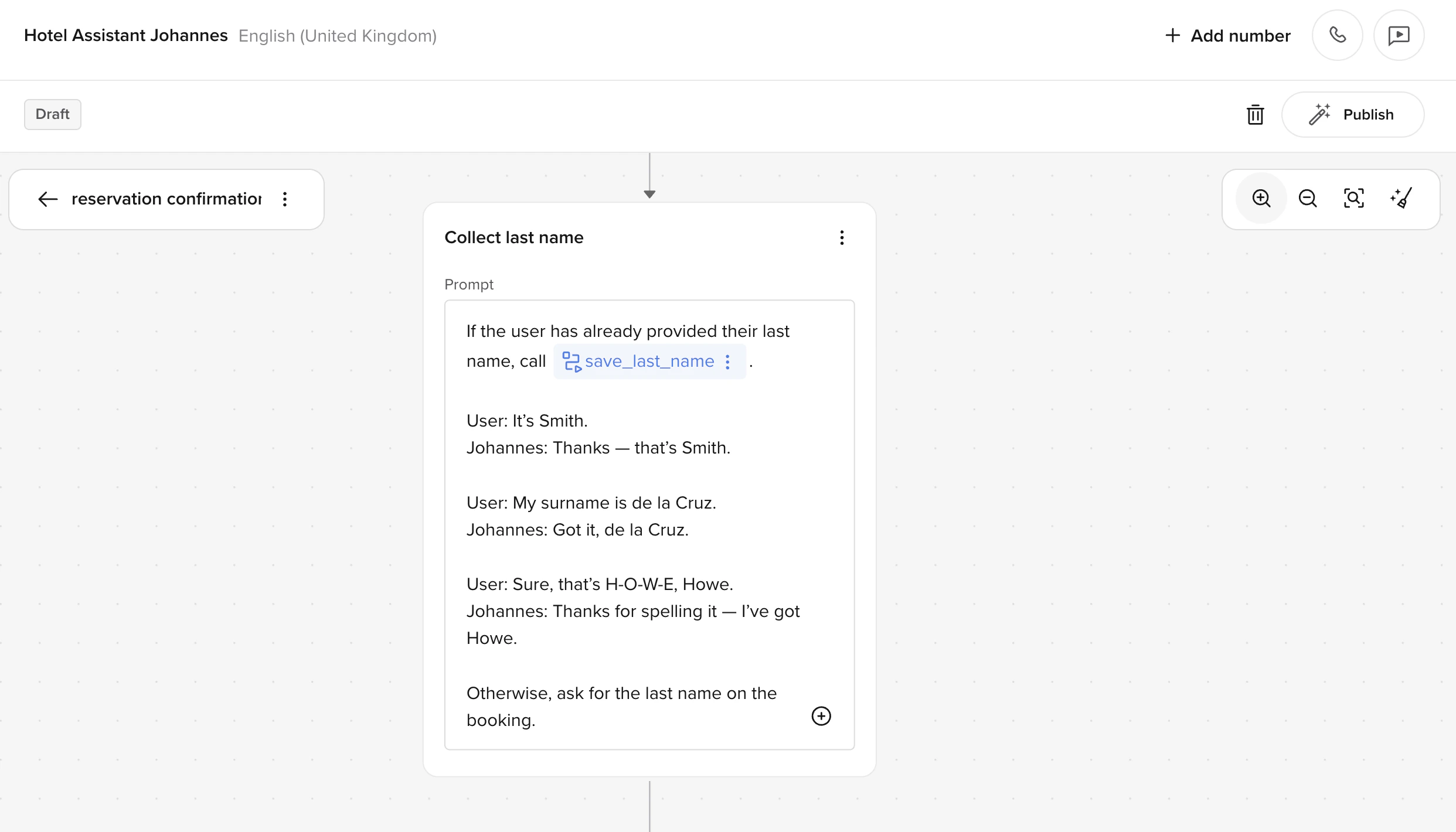

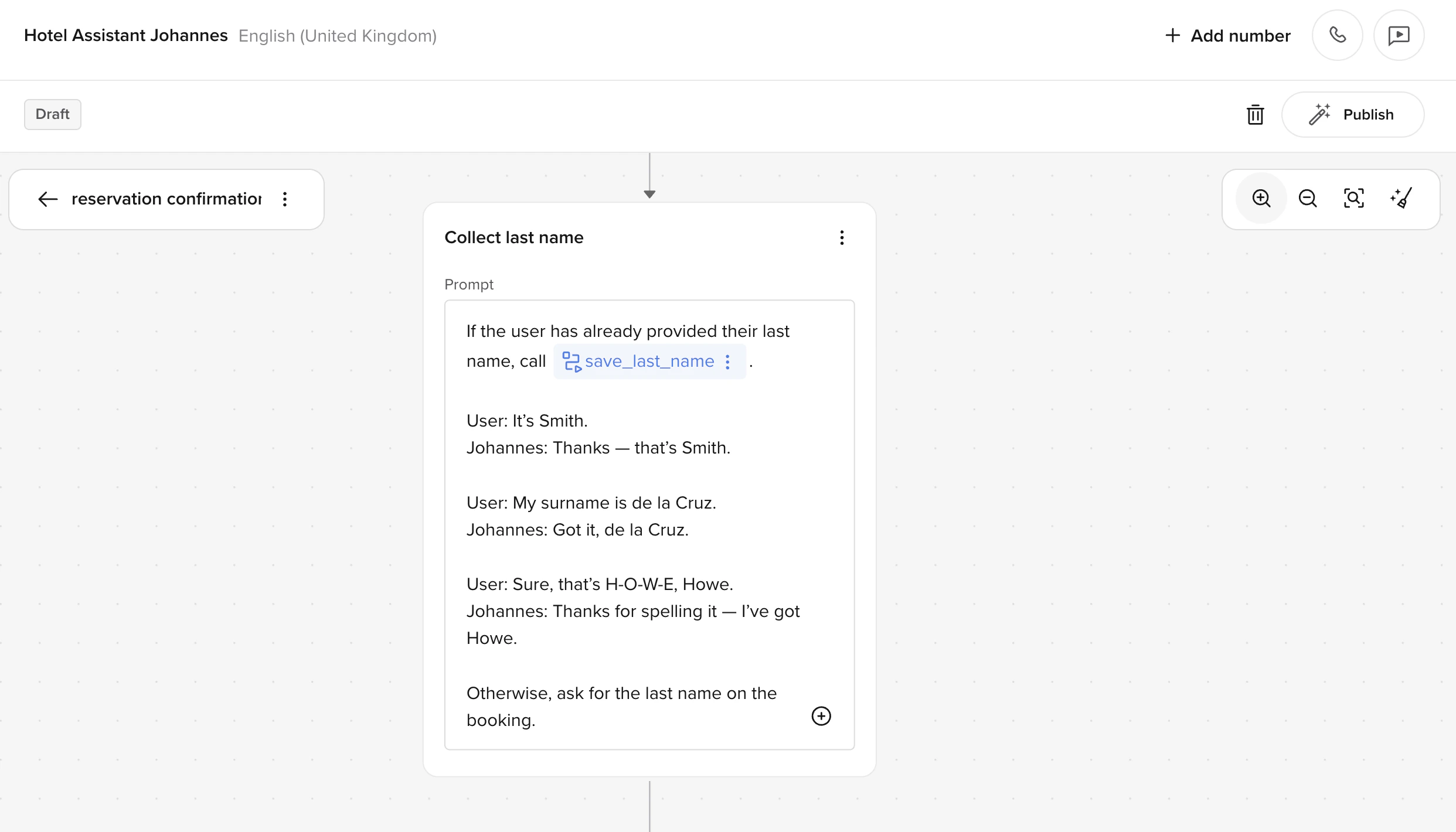

Here’s what a set of few-shot examples looks like inside a “Collect last name” step prompt:

User: It's Smith.

Agent: Thanks – that's Smith. [call save_last_name("Smith")]

User: My surname is de la Cruz.

Agent: Got it, de la Cruz. [call save_last_name("de la Cruz")]

User: Sure, that's H-O-W-E. Howe.

Agent: Thanks for spelling it – I've got Howe. [call save_last_name("Howe")]
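If you keep your examples in a script or spreadsheet before pasting them into the step prompt field, the layout above can be generated rather than typed by hand. The following is a minimal sketch under stated assumptions: `FewShotExample`, `render_examples`, and `build_step_prompt` are hypothetical helper names, not part of Agent Studio, and the instruction sentence is an illustrative stand-in. Only the `User:` / `Agent:` / `[call ...]` layout is taken from the examples above.

```python
from dataclasses import dataclass


@dataclass
class FewShotExample:
    """One few-shot pair (hypothetical helper type, not an Agent Studio API)."""
    user: str       # what the caller says
    reply: str      # what the agent says back
    function: str   # function the agent should call
    argument: str   # value passed to that function


def render_examples(examples: list[FewShotExample]) -> str:
    """Render examples in the User/Agent layout shown above."""
    blocks = [
        f"User: {ex.user}\n"
        f'Agent: {ex.reply} [call {ex.function}("{ex.argument}")]'
        for ex in examples
    ]
    return "\n\n".join(blocks)


def build_step_prompt(instructions: str, examples: list[FewShotExample]) -> str:
    """Place the examples at the top, before the main instructions."""
    return f"Examples:\n\n{render_examples(examples)}\n\n{instructions}"


EXAMPLES = [
    FewShotExample("It's Smith.", "Thanks, that's Smith.",
                   "save_last_name", "Smith"),
    FewShotExample("My surname is de la Cruz.", "Got it, de la Cruz.",
                   "save_last_name", "de la Cruz"),
    FewShotExample("Sure, that's H-O-W-E. Howe.",
                   "Thanks for spelling it, I've got Howe.",
                   "save_last_name", "Howe"),
]

# The instruction text is an assumed placeholder for your real step instructions.
step_prompt = build_step_prompt(
    "Ask for the caller's last name and save it with save_last_name.",
    EXAMPLES,
)
print(step_prompt)
```

The printed text is what you would paste into the step prompt. Keeping each example as its own record also makes it easier to swap or vary examples later without retyping the whole block.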

You don’t need dozens of examples – 2–5 is usually enough, especially if you cover:

- A standard, clean input

- A tricky edge case (e.g., multi-word names, spelled-out values)

- A fallback or clarification

- An input that’s already been provided earlier in the conversation

Too many examples can make the model too rigid or cause it to overfit to specific cases. If the agent starts parroting your examples word-for-word instead of generalising, reduce the number of examples or make them more varied.
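The coverage checklist and the 2–5 guideline above can be expressed as a quick check to run before you paste examples into a prompt. This is a sketch only: the kind labels (`clean`, `edge_case`, `clarification`, `already_provided`) and the function `review_example_set` are hypothetical names chosen for illustration, and you would tag your own examples by hand.

```python
# Suggested coverage from the checklist above; the label names are assumptions.
SUGGESTED_KINDS = {"clean", "edge_case", "clarification", "already_provided"}


def review_example_set(kinds: list[str], min_count: int = 2,
                       max_count: int = 5) -> list[str]:
    """Return warnings about the size and coverage of a few-shot example set.

    `kinds` holds one label per example, e.g. ["clean", "edge_case"].
    """
    warnings = []
    if len(kinds) < min_count:
        warnings.append(
            f"Only {len(kinds)} example(s); aim for at least {min_count}."
        )
    if len(kinds) > max_count:
        warnings.append(
            f"{len(kinds)} examples is more than {max_count}; "
            "consider trimming or varying them."
        )
    # Report each suggested kind that no example covers.
    for kind in sorted(SUGGESTED_KINDS - set(kinds)):
        warnings.append(f"No example covers: {kind}")
    return warnings


for warning in review_example_set(["clean", "clean", "edge_case"]):
    print(warning)
```

The warnings are advisory. A step that can never receive an already-provided value, for instance, does not need an example of that kind.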

Tips for strong few-shot examples

- Use realistic language – write examples that sound like actual callers, not idealized or overly formal phrasing.

- Show both success and edge cases – include at least one tricky input alongside a clean one so the model sees how to handle both.

- Match the agent’s persona – if the agent has a name and tone, use them consistently in the example responses.

- Pair responses with tool calls – show the model exactly which function to call and with what arguments.

- Keep examples independent – each example should stand alone. Don’t build a sequence where example 2 depends on example 1.

What to avoid

- Mixing FSP examples with conditional logic – keep your few-shot examples separate from if/else-style instructions in the same prompt. Mixing them confuses the model about what’s an example versus what’s a rule.

- Using too many examples – more than 5 examples rarely helps and can cause overfitting. Start with 2–3 and add more only if the agent struggles with specific cases.

- Copying examples between steps – each step has different functions and goals. Tailor your examples to the specific step they live in.

- Using placeholder data – avoid generic values like “John Doe” or “123”. Use realistic but varied values that reflect what real callers say.
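Two of the pitfalls above, placeholder data and rule-style lines mixed into the examples, are easy to spot mechanically. The sketch below is a crude heuristic, not an Agent Studio feature: `lint_example_block` is a hypothetical helper, the placeholder list contains only the two values named above, and the rule check simply flags lines that begin with "if", "else", or "otherwise".

```python
import re

# Placeholder values named in the list above; extend for your own domain.
PLACEHOLDER_PATTERNS = [
    re.compile(r"\bjohn doe\b", re.IGNORECASE),
    re.compile(r"\b123\b"),
]
# Lines that start like a rule rather than like a User:/Agent: turn.
RULE_LINE = re.compile(r"^\s*(if|else|otherwise)\b", re.IGNORECASE)


def lint_example_block(block: str) -> list[str]:
    """Flag placeholder values and rule-style lines in a few-shot block."""
    issues = []
    for number, line in enumerate(block.splitlines(), start=1):
        for pattern in PLACEHOLDER_PATTERNS:
            match = pattern.search(line)
            if match:
                issues.append(
                    f"line {number}: placeholder value '{match.group(0)}'"
                )
        if RULE_LINE.match(line):
            issues.append(f"line {number}: rule-style line inside examples")
    return issues


block = (
    "User: It's John Doe.\n"
    'Agent: Thanks. [call save_last_name("Doe")]\n'
    "If the caller refuses, end the call."
)
for issue in lint_example_block(block):
    print(issue)
```

A check like this will miss subtler problems, such as examples copied from another step, so treat it as a first pass before a manual review.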